Based on original research combining a B2B survey of localization, engineering, product, and security professionals, Reddit community discussions, and publicly available industry data.

The question is no longer “whether” but “how”.

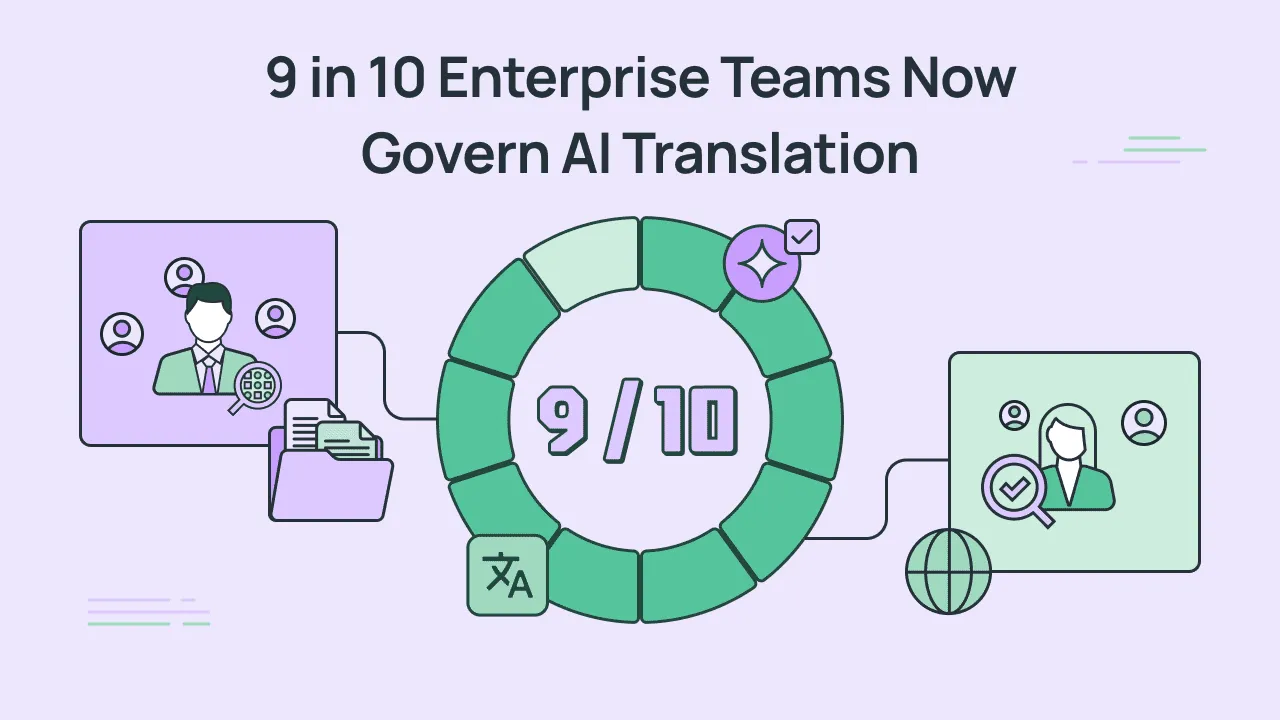

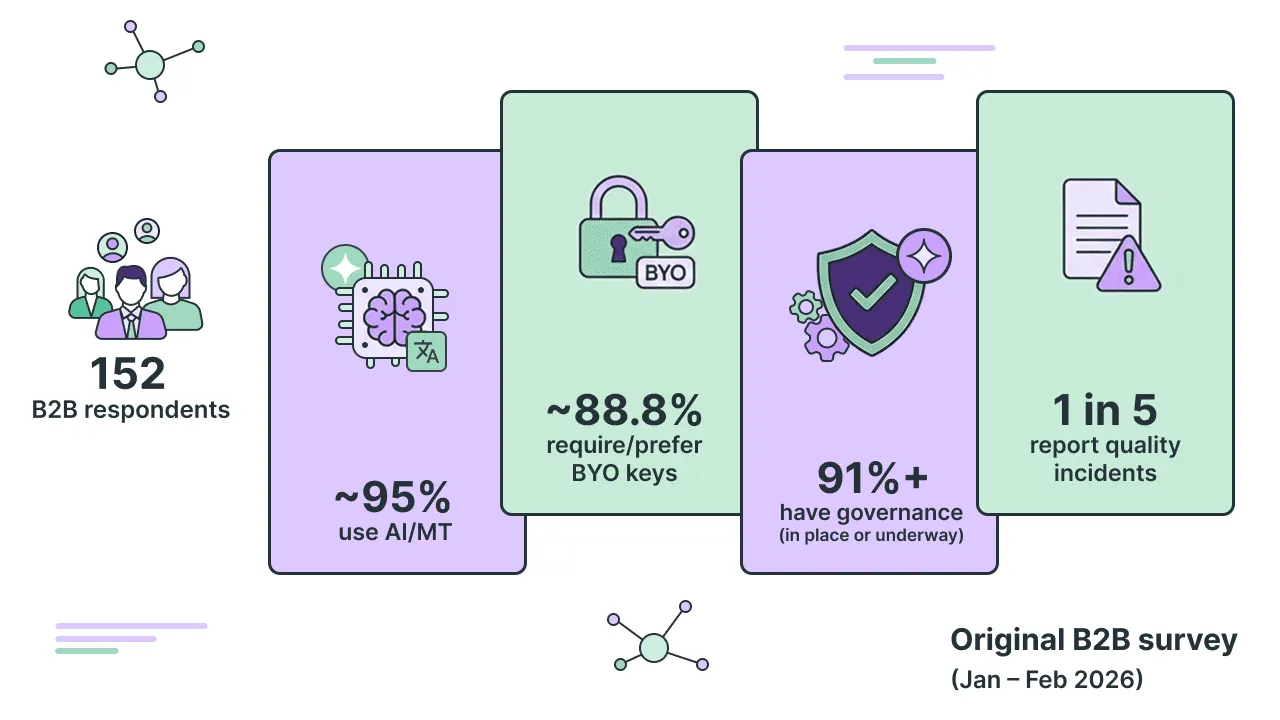

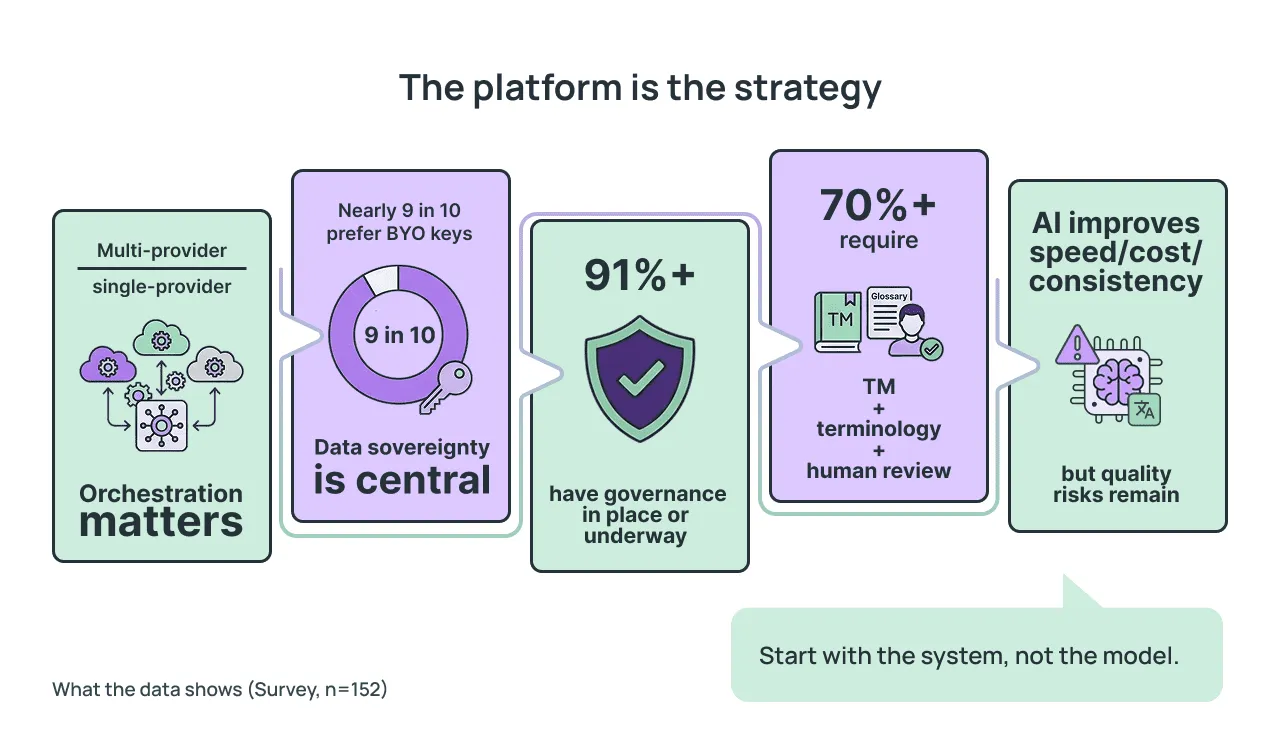

AI translation is no longer an experiment. It has become an operational baseline for enterprise teams managing multilingual content at scale. In our original survey of 152 B2B professionals across the US and Canada, roughly 95% of respondents already use AI or machine translation in some capacity – with nearly half doing so frequently and about 18% using it for every translation task. Nearly 9 in 10 require or prefer bring-your-own API keys. Over 91% already have governance frameworks in place or underway. And 1 in 5 report more quality incidents or regressions since introducing AI translation.

But adoption alone tells only part of the story. What matters now is how companies implement AI translation when the stakes include data security, regulatory compliance, brand consistency, and production reliability. The central finding of this research is clear: enterprise teams do not treat AI translation as a plug-and-play capability. They treat it as a managed process – one that demands governance, quality controls, cross-functional oversight, and platform-level orchestration.

In other words, the industry has moved past the debate over whether AI translation works. The real conversation is about how to make it work safely, predictably, and at scale.

From model selection to system design

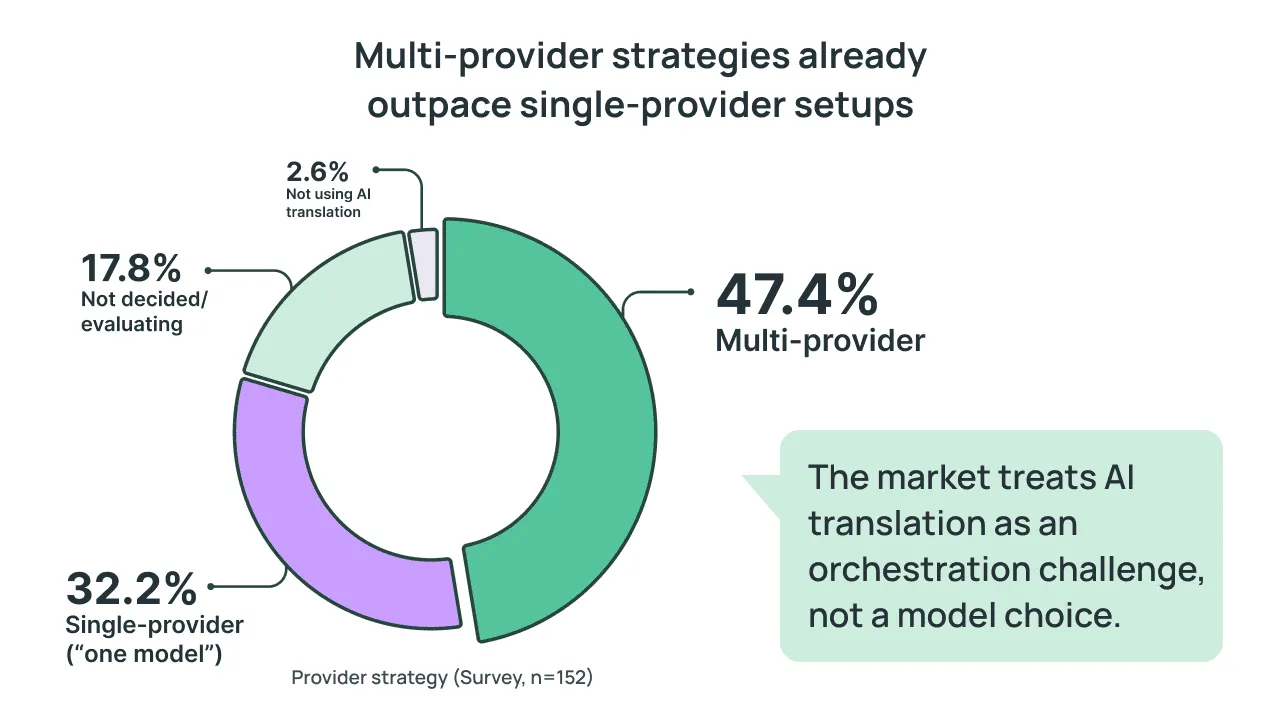

A common assumption in the localization industry is that choosing the right AI model is the critical decision. Our data tells a different story. When asked about their current provider strategy, 47.4% of respondents described a multi-provider setup, using different models depending on the task, language pair, or content type. Another 32.2% rely on a single provider, and 17.8% remain undecided or in evaluation. The remaining 2.6% reported not using AI translation.

Key Insight: Multi-provider strategies already outpace single-provider setups. This signals that the market views AI translation not as a model selection problem but as an orchestration challenge.

This shift is crucial. When teams use multiple providers, the value of any individual model diminishes relative to the system that selects, routes, evaluates, and governs how those models are used. The platform becomes the differentiator – not the model.
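
To make the routing idea concrete, here is a minimal sketch of how a system might pick a provider by content type and language. It is an illustration only: the provider labels, the `ROUTES` table, and the `select_provider` function are hypothetical names, not part of any platform's API or of the survey data.

```python
# Hypothetical routing table: (content_type, target_language) -> provider label.
# "*" is a wildcard for either field. Labels are placeholders, not real clients.
ROUTES = {
    ("legal", "*"): "provider_a",
    ("ui", "ja"): "provider_b",
    ("*", "*"): "provider_default",
}


def select_provider(content_type: str, target_lang: str) -> str:
    """Return the provider for the most specific matching route."""
    candidates = (
        (content_type, target_lang),  # exact match first
        (content_type, "*"),          # any language for this content type
        ("*", target_lang),           # any content type for this language
        ("*", "*"),                   # global fallback
    )
    for key in candidates:
        if key in ROUTES:
            return ROUTES[key]
    raise LookupError("no route configured")


print(select_provider("legal", "de"))
print(select_provider("ui", "ja"))
print(select_provider("marketing", "fr"))
```

The point of the sketch is that the routing table, not any single model, carries the decision logic, so swapping a provider becomes a one-line configuration change.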

Where AI translation actually happens

Our data confirms that platform-based workflows already dominate. When asked where AI translation happens today, 65.8% of respondents said it occurs inside a TMS (translation management system). Another 34.9% use direct API integration with internal tooling, and 30.3% still use standalone tools like ChatGPT’s interface.

This distribution shows a hybrid market. Structured, platform-first approaches are leading, yet direct model usage coexists – often as a stopgap or experimentation layer. The direction of travel, however, is unmistakable: the TMS/platform approach is the operational standard for teams that have moved past experimentation.

Security and governance: the non-negotiable foundation

Enterprise AI translation is not a translation quality story. It is a data governance one. Our survey revealed that the most sensitive constraints on AI translation have nothing to do with linguistic accuracy and everything to do with what data teams are willing to expose to external providers.

Data boundaries

Respondents identified the following content categories as too sensitive to send to external AI providers:

- 80.9% – PII / user data

- 78.3% – Legal and contractual content

- 64.5% – Security-related content

- 9.9% – Confidential strategy documents and roadmaps

- 57.9% – Source code

These numbers confirm a fundamental constraint: in enterprise contexts, AI localization operates within data boundaries. The model’s linguistic capability is secondary to the question of what it is and is not allowed to process.
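
A data boundary of this kind can be enforced as a gate that runs before any text leaves the organization. The sketch below is a simplified illustration under stated assumptions: the category tags are hypothetical labels mirroring the survey categories, and the email pattern is a deliberately naive stand-in for real PII detection.

```python
import re

# Hypothetical tags mirroring the categories respondents flagged as too sensitive.
BLOCKED_CATEGORIES = {"pii", "legal", "security", "strategy", "source_code"}

# Naive email pattern used here as a stand-in for proper PII detection.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def may_send_externally(text: str, categories: set[str]) -> bool:
    """Return True only if the content may go to an external AI provider."""
    if categories & BLOCKED_CATEGORIES:
        return False  # tagged with a blocked category
    if EMAIL_RE.search(text):
        return False  # looks like it contains an email address
    return True


print(may_send_externally("Save changes", {"ui"}))
print(may_send_externally("Contact jane@example.com", {"ui"}))
print(may_send_externally("Clause 4.2 applies", {"legal"}))
```

In practice such a gate would sit in front of every provider call, so the policy is applied once rather than reimplemented per integration.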

Credential and access control

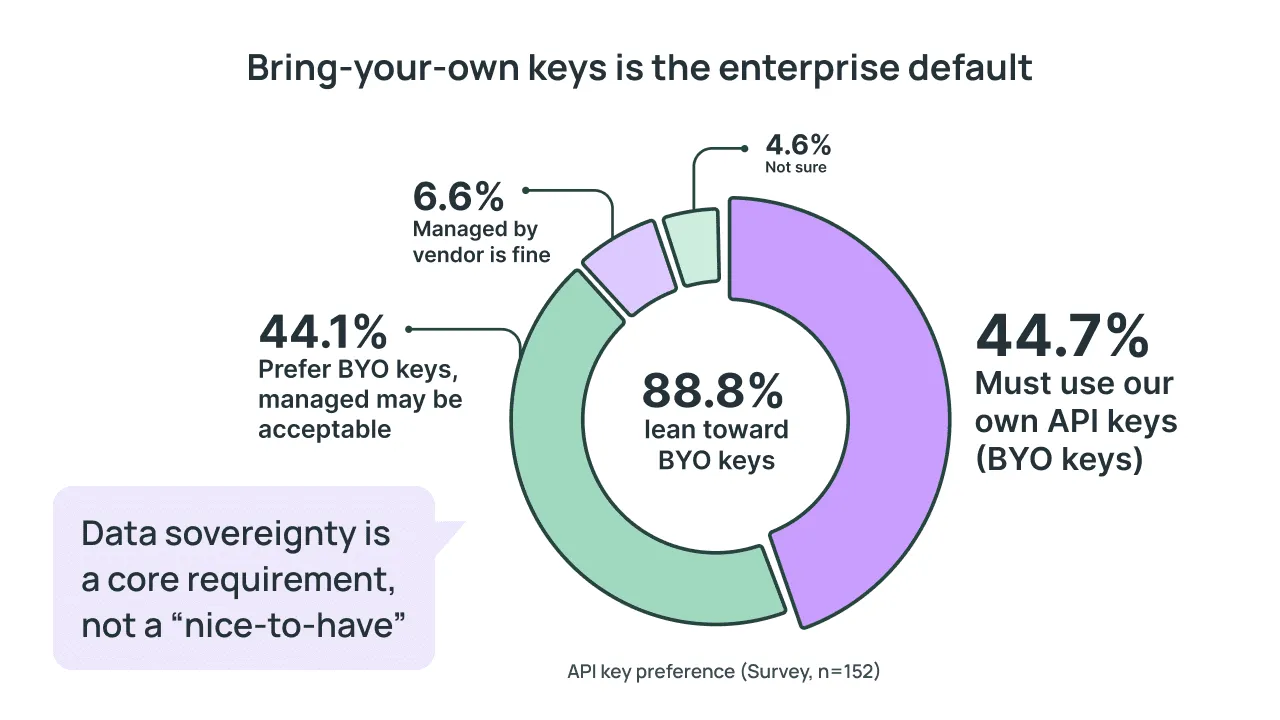

On the question of API key management, the results were equally decisive. Approximately 88.8% of respondents lean toward using their own API keys (BYO keys), either as a strict requirement (44.7%) or as a strong preference (44.1%). Only 6.6% are comfortable with vendor-managed credentials.

Key Insight: Nearly 9 in 10 enterprise teams require or prefer bring-your-own API keys – a clear signal that data sovereignty is a core purchasing criterion, not a secondary feature.

Governance maturity

Governance is no longer aspirational. Among respondents, 39.5% reported having company-wide AI governance policies, 28.3% have policies for specific teams, and 23.7% are currently building them. Only 6.6% operate without any governance framework.

Taken together, over 91% of organizations already have governance structures in place or underway. The market is formalizing rapidly – this is no longer the early-adoption phase.


AI translation is a cross-functional decision

One of the most telling findings of the survey concerns who must approve AI translation before it reaches production. The answer is rarely a single team.

- 74.3% – require Localization team approval

- 55.9% – require Engineering team approval

- 52.0% – require Security, Compliance, or Legal sign-off

- 32.9% – require Leadership approval

- 28.9% – require Procurement involvement

Only 9.2% of respondents said that no formal approval process exists. For the majority, AI translation is a cross-functional process that spans localization, engineering, security, and executive leadership. This multi-stakeholder reality makes a platform approach not just convenient but the norm: it provides the shared governance surface where different teams can enforce their requirements simultaneously.

Why teams choose a platform over direct model integration

We asked respondents directly: what motivates the choice of a platform or TMS over plugging in a model through API integration alone? The top reasons form a coherent picture.

- 71.1% – Quality tooling (TM, glossary, context, QA)

- 67.8% – Workflow and integrations (CI/CD, CMS, support tools)

- 67.1% – Governance (SSO, RBAC, audit trails)

- 52.0% – Cost control (usage tracking, quotas)

- 43.4% – Faster rollout with less engineering effort

The takeaway: teams choose a platform not for any single reason but for the combination of quality tooling, integrations, and governance. No individual model provides this – regardless of how capable its translations are.

What breaks without a platform

When asked what fails most often in model-only setups, respondents pointed to operational gaps rather than abstract AI limitations:

- 58.6% – Missing context (UI strings, screenshots)

- 55.9% – Quality consistency (terminology and brand voice)

- 34.9% – Multi-team coordination

- 32.9% – Deployment automation

- 29.6% – Compliance and security controls

Without a platform layer, what breaks is not AI itself but the operating environment around it – context, consistency, coordination, and deployment. These are infrastructure problems, not model problems.

“The fact that its incorrect solutions are also usually plausible on the surface, making it difficult to catch mistakes.”

“We are battling with inconsistencies and hallucinations even with clear instructions given to the tools and models we use.”

The quality control stack: AI inside process, not instead of process

One of the clearest signals from the survey is that enterprise teams do not view AI as a replacement for quality processes. They view it as a component within a quality stack. When asked which quality controls are mandatory for production-ready translations, respondents identified:

- 79.6% – Glossary and terminology enforcement

- 75.7% – Human proofreading / LQA

- 73.0% – Translation Memory (TM)

- 68.4% – Automated QA checks

- 61.2% – Style guide and brand voice rules

- 56.6% – In-context review

Fewer than 1% of respondents said minimal or no quality controls were acceptable. The market does not want AI instead of process. Instead, teams are seeking AI inside a quality stack where terminology, memory, style guides, and human judgment all play defined roles.

“You cannot fully rely on the outcome without human proofreaders.”

“Content that doesn’t feel human, potentially reducing engagement and reader trust.”

This view is consistent with what practitioners share independently. In a discussion on the r/LocalLLaMA subreddit, one user put it plainly: “It’s definitely not a last instance, nor a replacement for professional human review, but it can be handy for QA.”
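
Glossary and terminology enforcement, the control ranked highest above, is also one of the easiest to automate. The following is a minimal sketch of such a check, assuming a simple source-term to target-term glossary and plain substring matching. The function name and the sample glossary are hypothetical, and a production check would also need to handle inflection and word boundaries.

```python
def glossary_violations(
    source: str, translation: str, glossary: dict[str, str]
) -> list[str]:
    """Return source terms whose required target term is missing."""
    src = source.casefold()
    tgt = translation.casefold()
    return [
        term
        for term, required in glossary.items()
        # Flag a term only if it occurs in the source and the
        # approved target term does not occur in the translation.
        if term.casefold() in src and required.casefold() not in tgt
    ]


# Hypothetical English-to-German glossary for illustration.
glossary = {"dashboard": "Dashboard", "workspace": "Arbeitsbereich"}
issues = glossary_violations(
    "Open the workspace dashboard",
    "Öffnen Sie das Arbeitsplatz-Dashboard",
    glossary,
)
print(issues)
```

A check like this flags candidates for review rather than replacing it: the flagged segment still goes to a human proofreader, consistent with the view quoted above.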

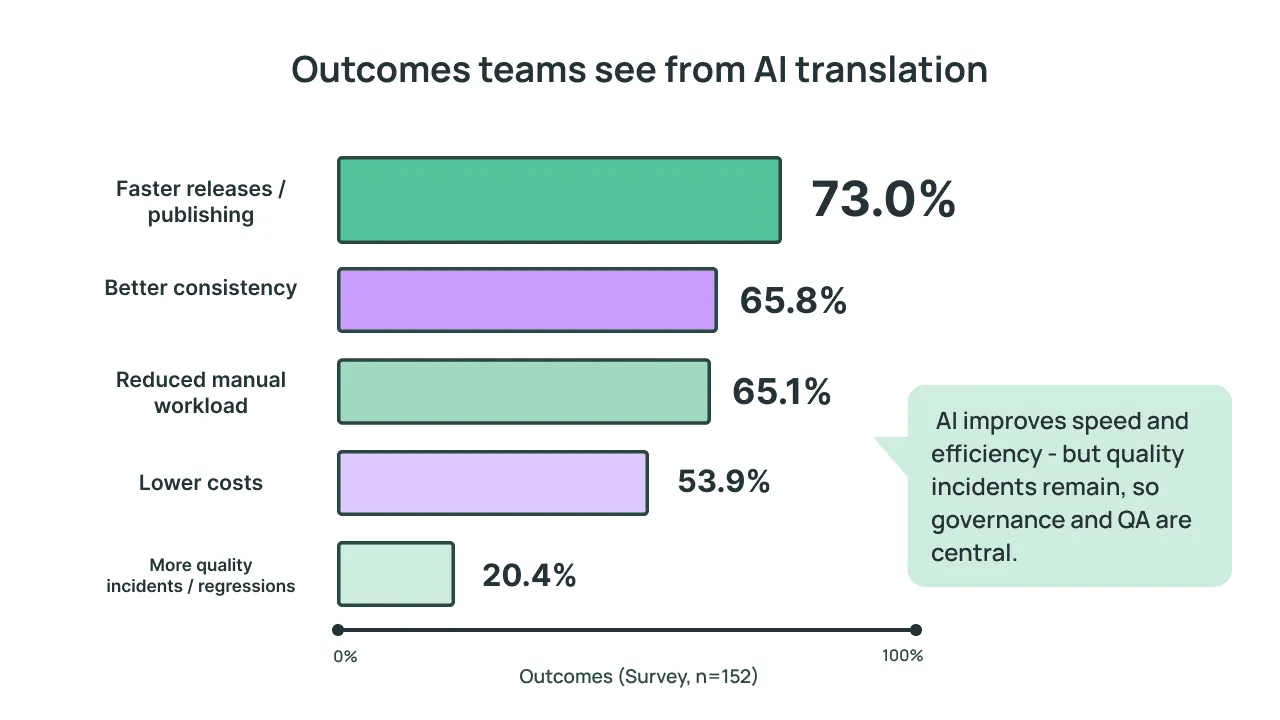

Outcomes: clear value, persistent risks

Enterprise teams already see measurable outcomes from AI translation:

- 73.0% – report faster releases and publishing

- 65.8% – report better consistency

- 65.1% – report reduced manual workload

- 53.9% – report lower costs

At the same time, 20.4% of respondents reported more quality incidents or regressions since introducing AI translation. AI translation delivers clear operational value – speed, workload reduction, consistency, and cost savings – but quality risks remain significant enough that governance and platform controls are not optional but central.

“AI struggles in remembering even short chapters and starts hallucinating quite quickly… results in language models for not-widely-spoken languages still require a lot of corrections.”

“I’d say my biggest concerns are data leakage and confidentiality issues, the drop in quality when teams rely too heavily on AI, and the risk of losing real human expertise and accountability in important content.”

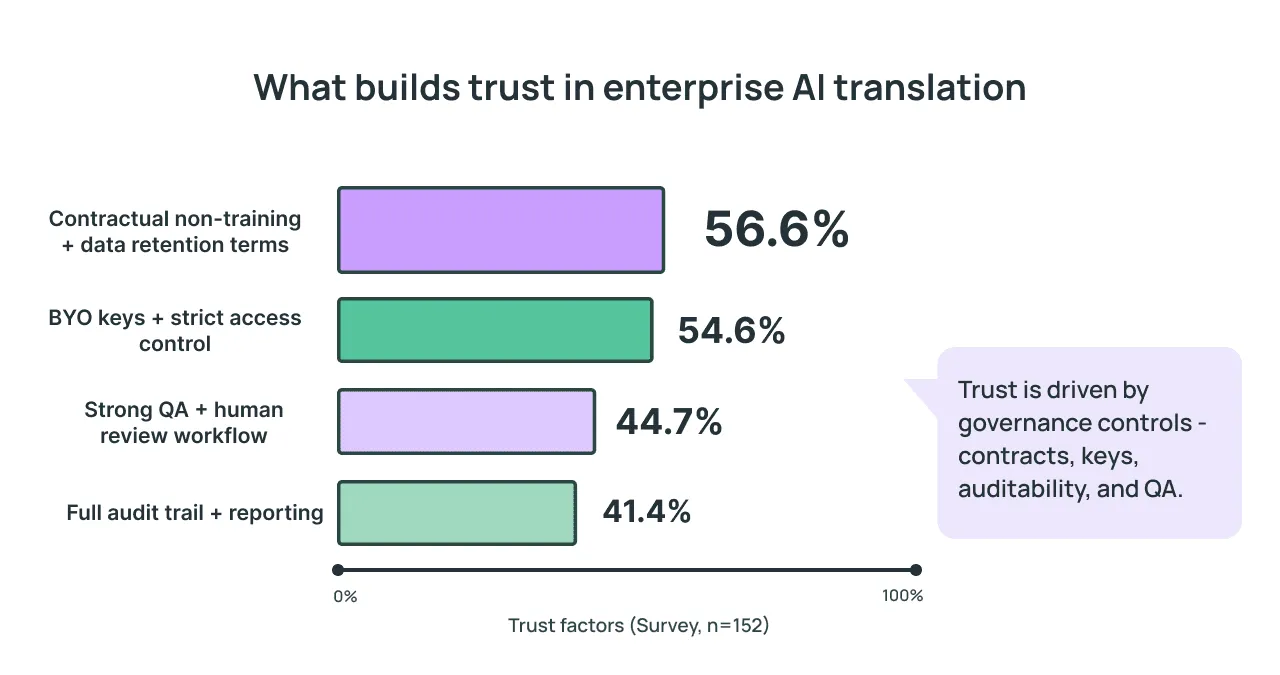

What builds trust in AI translation

We asked respondents which factors most increase their trust in an AI translation setup. The top responses form a practical blueprint:

- 56.6% – Contractual non-training and data retention terms

- 54.6% – BYO keys combined with strict access control

- 44.7% – Strong QA plus human review workflow

- 41.4% – Full audit trail and reporting

Key Insight: Trust in AI translation is built through contracts, access control, audit trails, and QA workflows – not through marketing claims about model accuracy.

From survey to practice: how companies implement platform-based AI translation

The survey findings are not theoretical. Real companies across industries have already adopted the platform-based approach that respondents describe. Here are several examples that illustrate the pattern.

Suitsupply: AI-first, platform-centered

Suitsupply, the global premium menswear retailer, achieved a fully automated, 100% AI-powered localization workflow across eight target languages. But the critical enabler was not the model itself – it was the platform layer. By integrating Crowdin with Figma for design context and Contentful for CMS publishing, Suitsupply reduced turnaround from two to three working days to just a few hours – a 95% improvement. Translation memory and glossary enforcement ensured brand consistency, while visual QA in the design context addressed the very pain point that 58.6% of survey respondents identified: missing context.

Strava: speed through orchestration

Strava, the global fitness platform used across 185 countries, prioritized integrations above all else when building its localization infrastructure. With more than 25 repositories and tools across its ecosystem, the company built its globalization stack in under six weeks using Crowdin as the central orchestration layer. AI and machine translation run through the platform, with NMT and LLM providers connected via Crowdin’s orchestration capabilities. The result: Runna, an acquired running coach app, was localized and launched globally using a repeatable, automated setup – exactly the kind of platform-driven velocity our survey respondents prioritize.

MyHeritage: multi-provider at scale

MyHeritage, a Crowdin customer, serves users in 50 languages and operates a multi-provider AI/MT stack that includes DeepL, Google Translate, Gemini, and OpenAI – with language-specific prompts tailored to different language families. Their multi-step workflow – source review, TM check, AI translation, automated checks, AI proofreading, and final QA – demonstrates the layered quality control that 79.6% of our survey respondents consider mandatory. Community experts and translators handle nuance and domain-specific terminology, reinforcing the human-in-the-loop requirement that 75.7% of respondents identified as essential.
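
A layered workflow of this shape can be pictured as a chain of stages that each job passes through in order. The sketch below is a generic illustration, not MyHeritage's or Crowdin's implementation. It models only three of the steps (TM check, AI translation, automated checks), and every name in it, including the `Job` class, the `TM` dictionary, and the placeholder draft text, is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Job:
    source: str
    target: Optional[str] = None
    log: List[str] = field(default_factory=list)


# Hypothetical translation memory with exact-match lookups only.
TM = {"Save": "Speichern"}


def tm_check(job: Job) -> Job:
    """Reuse an approved translation when the source is already in the TM."""
    if job.source in TM:
        job.target = TM[job.source]
        job.log.append("tm_hit")
    return job


def ai_translate(job: Job) -> Job:
    """Draft a translation only if the TM did not supply one."""
    if job.target is None:
        job.target = f"[draft] {job.source}"  # placeholder for a provider call
        job.log.append("ai_draft")
    return job


def automated_checks(job: Job) -> Job:
    """Reject empty output before it reaches human review."""
    if not job.target or not job.target.strip():
        raise ValueError("empty translation")
    job.log.append("auto_qa_passed")
    return job


STAGES: List[Callable[[Job], Job]] = [tm_check, ai_translate, automated_checks]


def run(job: Job) -> Job:
    for stage in STAGES:
        job = stage(job)
    return job


print(run(Job("Save")).log)
print(run(Job("Cancel")).log)
```

Because each stage has the same signature, further steps such as AI proofreading or a final QA hand-off could be appended to `STAGES` without changing the runner.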

Wildlife Studios: enterprise-scale orchestration

Wildlife Studios, a Crowdin customer, is one of the leading mobile game developers based in Brazil, publishing games for a global audience. The company manages 27 localization projects across more than 12 languages, combining agency translators, in-house teams, and quality coordinators. Crowdin AI handles draft translation, while the broader system – API integration, versioning, analytics, Translation Memory, and Zendesk integration for support content – provides the operational infrastructure. The results are measurable: a 25% increase in players in one market and a 30% increase in resolved support requests through localized content.

Polhus: human review as standard

Polhus, a Scandinavian e-commerce company, used AI pre-translation through Crowdin to process content across European markets. Although 75% of translations were publication-ready without edits, 100% were reviewed by human editors – demonstrating that even high-quality AI output benefits from systematic human oversight. Glossary enforcement and DatoCMS integration completed the managed workflow, resulting in approximately $80,000 in cost savings.

Conclusion: the platform is the strategy

These findings align with broader industry signals. Slator’s roundup of the most-discussed language industry topics of 2025 points to the same shift: the conversation has moved from whether to use AI translation to how to implement it safely, with quality, privacy, and governance moving to the foreground. Similarly, practitioners interviewed by Multilingual describe the most effective localization setups as hybrid workflows – machine translation for speed, human review for quality and cultural fit – coordinated across product, marketing, legal, and compliance teams. Both sources independently reinforce what our survey data shows at the enterprise level.

The data is unambiguous:

- Multi-provider setups outnumber single-provider approaches, confirming that the platform’s orchestration role matters more than any individual model.

- Nearly 9 in 10 teams require or prefer bring-your-own API keys, placing data sovereignty at the center of every purchasing decision.

- Over 91% of organizations have governance frameworks in place or underway – the market is formalizing, not experimenting.

- More than 70% consider terminology enforcement, human review, and Translation Memory to be mandatory quality controls.

- AI delivers measurable value in speed, cost, and consistency – but quality risks remain, making governance and platform controls essential rather than optional.

For teams trying to understand how to implement AI translation, the evidence points in one direction: start with the system, not the model. This means defining your data boundaries, establishing governance, building quality controls into the workflow, and choosing a platform that can orchestrate multiple providers while keeping every stakeholder – localization, engineering, security, and leadership – aligned.

The model matters. But the platform is the strategy.

Methodology

This research is based on a primary B2B study conducted by Crowdin in partnership with ESADigital and ITitov Agency between January and February 2026. Data was collected through a multi-channel outreach strategy, including targeted email campaigns, LinkedIn professional networks, and dedicated industry survey platforms to ensure a high-quality, verified respondent pool.

The sample comprises 152 professionals across the US and Canada, spanning localization and translation (50.7%), engineering and development (17.8%), product and content operations (15.8%), security and compliance (7.2%), and procurement (4.6%). Respondents represent a diverse range of organization scales, from agile startups (23.7% with 1–50 employees) to global enterprises (16.4% with 10,000+ employees), with significant representation from mid-market and enterprise organizations.

Key industry sectors include SaaS/Software (42.8%), E-commerce/Retail (13.8%), Gaming (12.5%), and Fintech (9.2%). The study was specifically designed to evaluate enterprise-grade requirements for secure, governed AI translation workflows and platform-level orchestration.

“Enterprise teams aren’t looking for the best AI model. They’re looking for a way to govern multiple models, control what data gets exposed, and ensure consistent output across every language and every team. That’s a platform problem, not a model problem.”
